It is not cost effective to store small amount of data and 1TB is right on the threshold when most solutions are least optimal.

That cloud storage comparison is a screenshot of a source data for my very old blog post (2018) Cloud Storage Pricing | Trinkets, Odds, and Ends that was since updated here Cloud Storage Pricing, Revisited | Trinkets, Odds, and Ends and by now became obsolete again, but that screenshot seems to have taken on life of its own. Thank you for any details you can provide. I will try to find this explanation again but can someone enlighten me here? I’m about to do some speed test but still wondering if I schedule this first backup to run only nightly, or throttled through the day, can Duplicacy deal with failures or unfinished backups?Īnd lastly, somewhere on this forum I read that writing to a local repository before sending to cloud is a better practice. I recently got fiber and at this time I have 60Mb up.

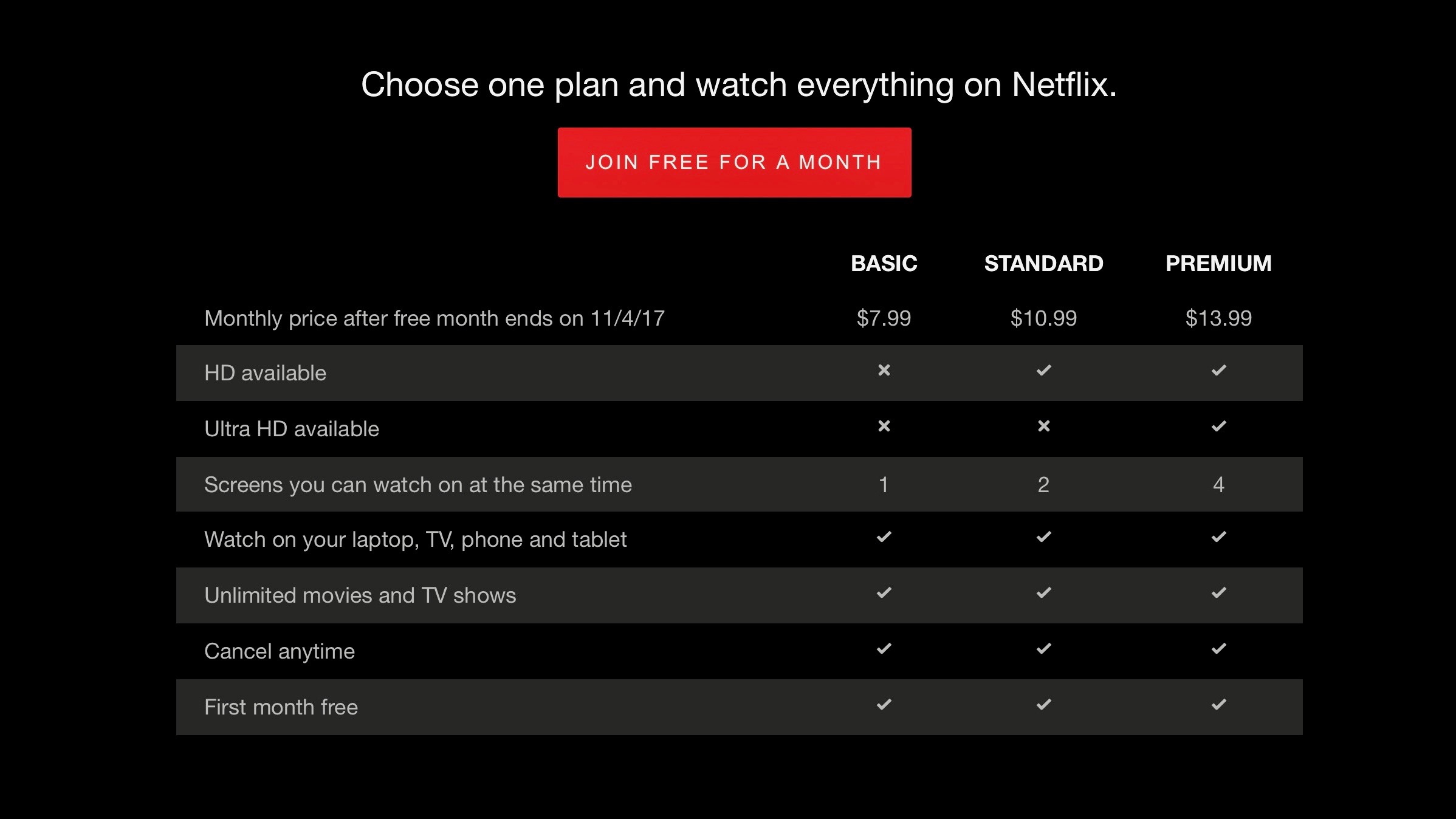

Can anyone comment on cost? I’m aiming to keep my cost down to <$45/year for 1TB storage (no access). So my first question is somewhere on this forum I found this chart: Where did this come from? I’ve worked some s3 and Azure calculators and found that maybe Azure is in fact less expensive for what I’m trying to do. Nonetheless I will probably never need to access unless my house burns down (and since I’m in Northern California this is becoming a possibility almost every year). The other 15% is a mix of rarely changes, maybe 5% changes daily or weekly. Of this 1TB of data, approx 85% could be very cold (it never changes, I never access it). I already have reliable lan backup but want a solution for complete disaster/recovery. I need to backup 1TB of data to cloud storage. My requirements include: minimal complexity, good support, storage agnostic, local encryption. I’m evaluating numerous cloud backup solutions.
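For a rough sense of the numbers in the question (the <$45/year target for 1TB and the 60Mb uplink), here is a back-of-the-envelope sketch. It assumes decimal units (1TB = 1000GB), that "60Mb" means 60 megabits per second sustained, and an 8-hour nightly window, which is purely a hypothetical figure for illustration. Real throughput and provider pricing will differ.

```python
import math

def max_price_per_gb_month(budget_per_year, size_gb):
    """Highest storage price ($/GB-month) that stays within the yearly budget."""
    return budget_per_year / 12 / size_gb

def upload_hours(size_gb, uplink_mbps):
    """Hours needed to push size_gb over a link of uplink_mbps (megabits/s)."""
    megabits = size_gb * 1000 * 8  # GB -> MB -> megabits (decimal units assumed)
    return megabits / uplink_mbps / 3600

SIZE_GB = 1000        # 1TB, decimal units (assumption)
BUDGET = 45.0         # dollars per year, from the post
UPLINK_MBPS = 60.0    # "60Mb up", read as megabits per second (assumption)
NIGHTLY_HOURS = 8     # hypothetical nightly backup window

price = max_price_per_gb_month(BUDGET, SIZE_GB)
hours = upload_hours(SIZE_GB, UPLINK_MBPS)
nights = math.ceil(hours / NIGHTLY_HOURS)

print(f"Budget allows up to ${price:.5f} per GB-month")
print(f"Initial upload at full line rate: about {hours:.1f} hours")
print(f"Spread over {NIGHTLY_HOURS}-hour nights: about {nights} nights")
```

Under these assumptions the budget works out to a little under $0.004 per GB-month, and the first upload takes roughly a day and a half of continuous transfer, so a nightly-only schedule would span several nights.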
